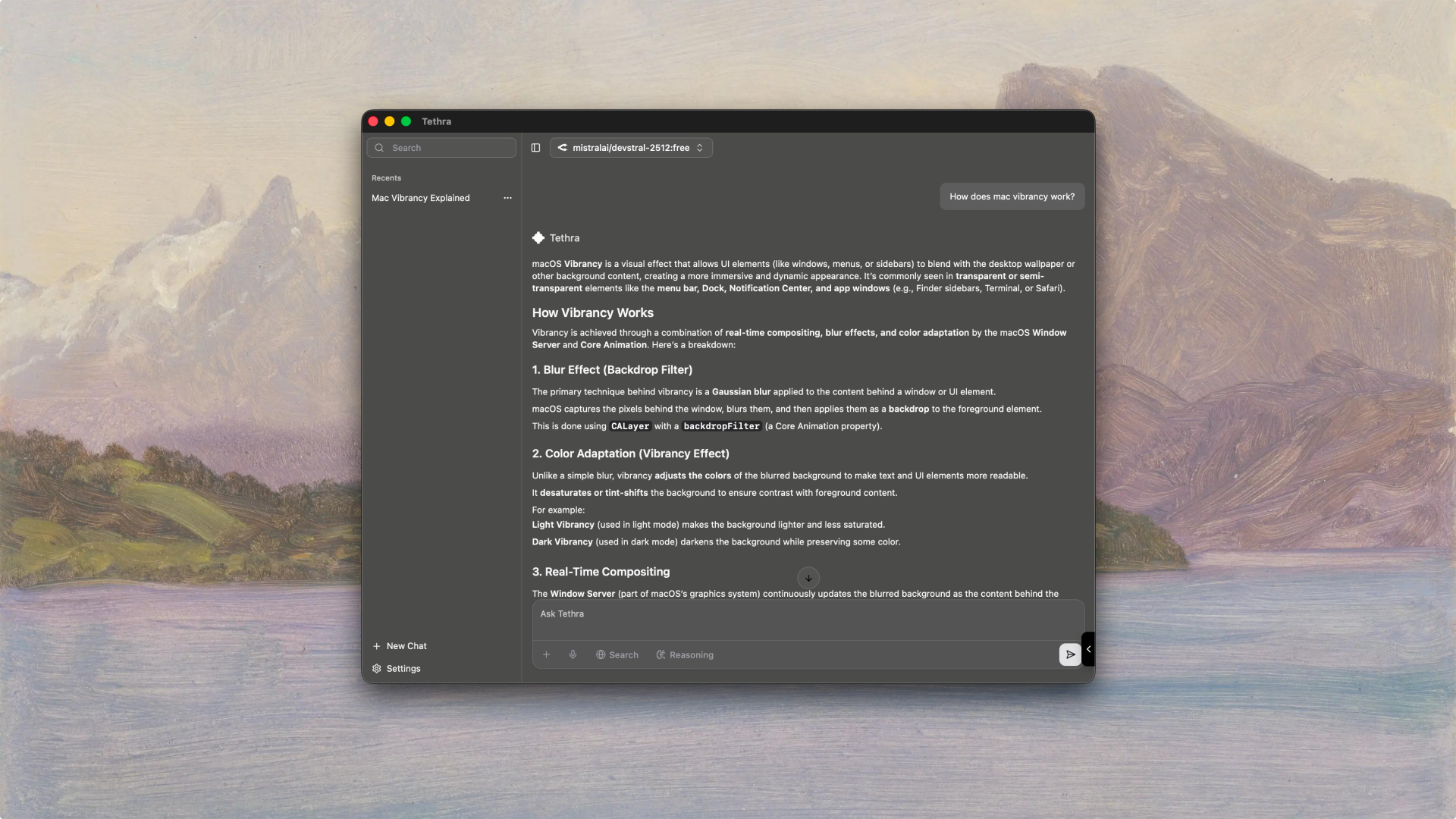

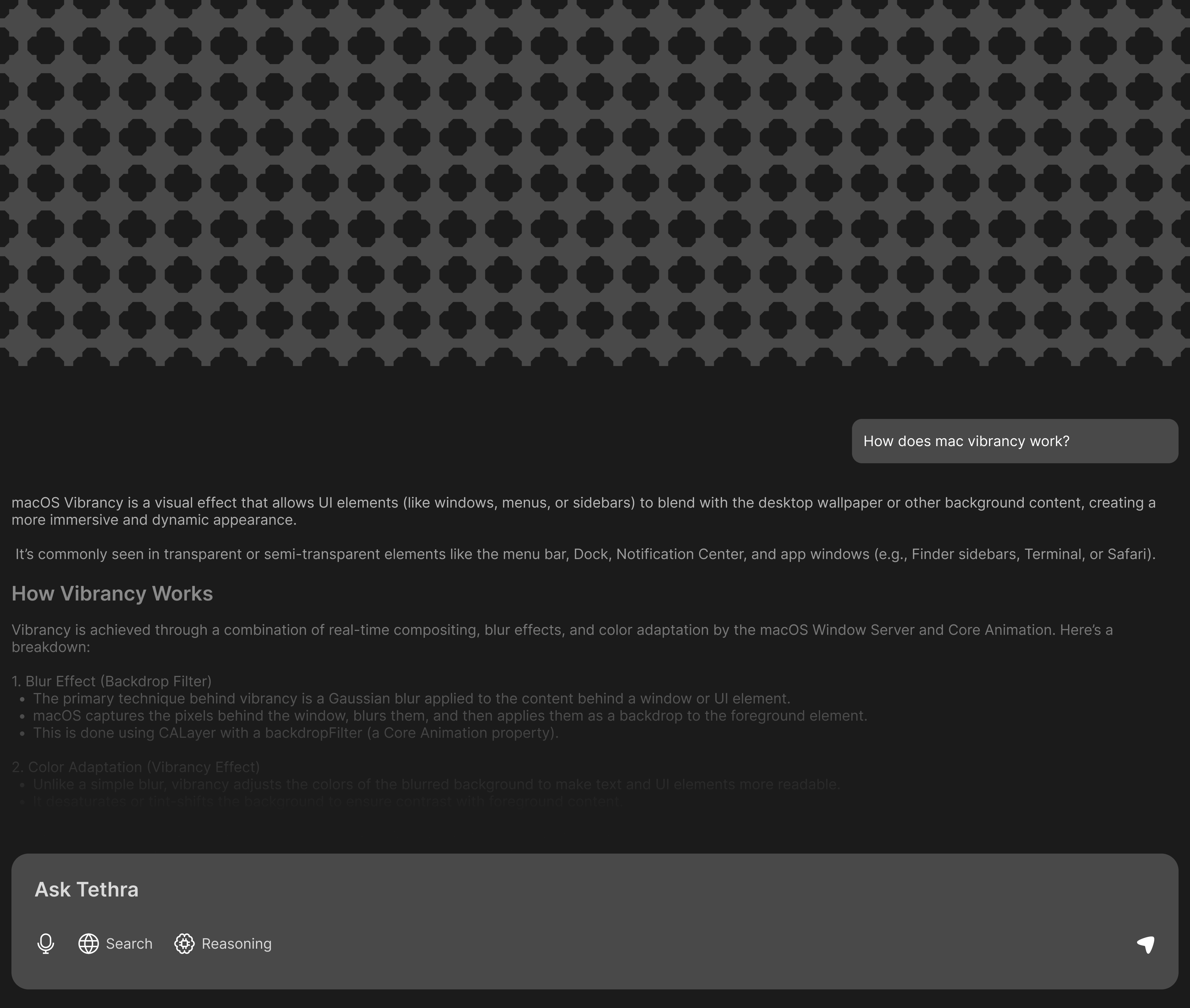

The problem with how we use AI today:

I was doing a lot of AI-assisted work across research, coding, and writing.

Every provider has something different to offer: GPT-4o is solid for coding, Claude is great for writing, and Gemini handles long contexts well. But switching between them means losing history, losing context, and constantly re-explaining where you left off. Web interfaces also store your conversations on someone else's server by default, which I wasn't thrilled about.

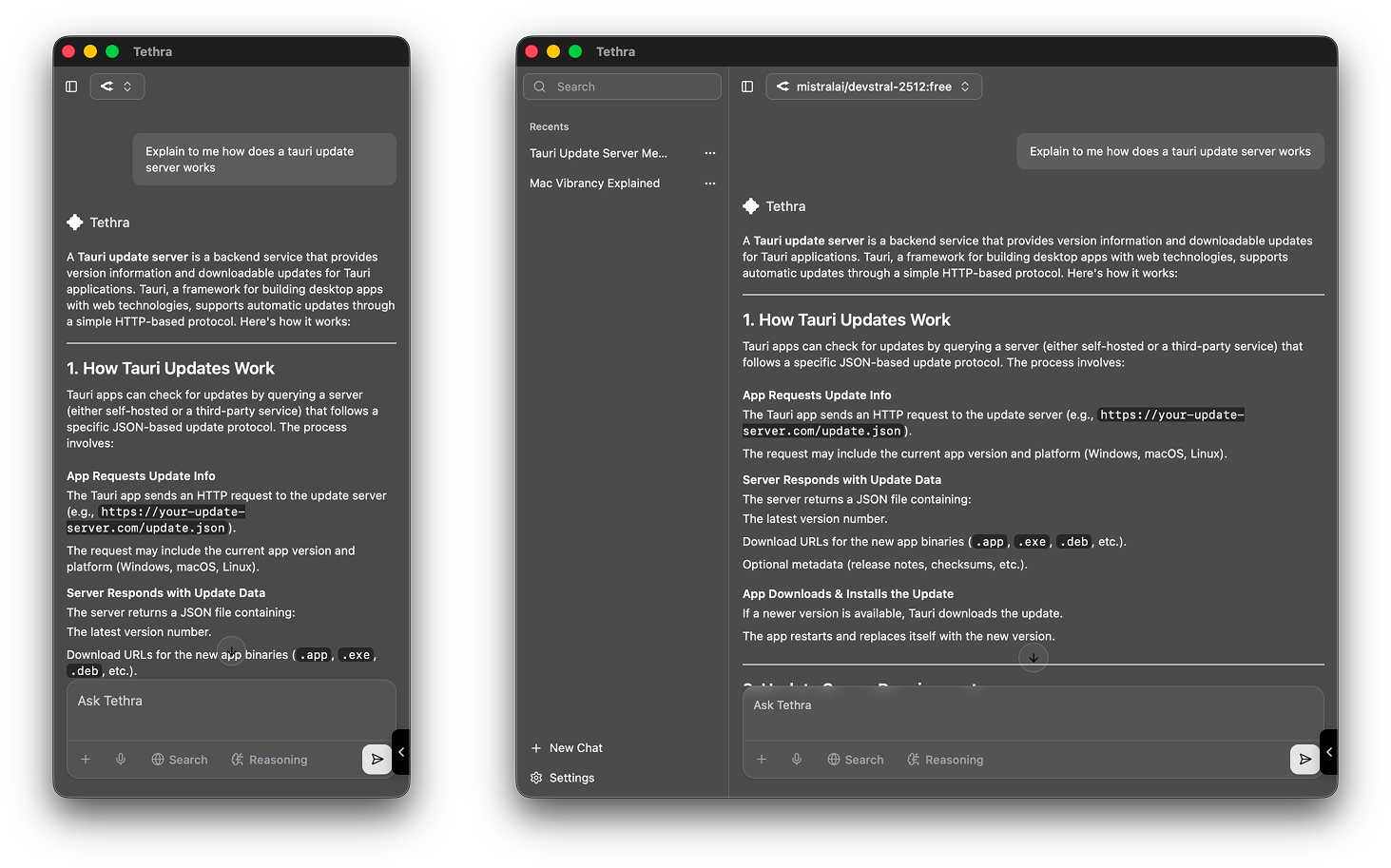

I picked Tauri over Electron because I didn't want to ship a 200MB app. Electron bundles its own copy of Chromium and Node.js into every app. Tauri instead uses the operating system's native webview with a Rust backend, which keeps the binary far smaller. The tradeoff is that you're writing some Rust, which has a steeper learning curve, but for the core functionality (file system access, SQLite, IPC) it wasn't too bad.
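
On the frontend, Rust commands are reached through Tauri's `invoke` function, imported from `@tauri-apps/api/core` in Tauri v2. Below is a minimal sketch of a typed wrapper around it. The command names `list_conversations` and `delete_conversation` and the `ConversationSummary` shape are hypothetical. `invoke` is passed in as a parameter so the sketch runs without a Tauri runtime.

```typescript
// Same call shape as Tauri's `invoke(cmd, args)`; injected so this runs outside Tauri.
type InvokeFn = <T>(cmd: string, args?: Record<string, unknown>) => Promise<T>;

// Hypothetical payload returned by a hypothetical Rust command.
export interface ConversationSummary {
  id: string;
  title: string;
}

// Wrap each backend command once, so components call typed functions
// instead of passing command-name strings around.
export function makeBackendApi(invoke: InvokeFn) {
  return {
    listConversations: () =>
      invoke<ConversationSummary[]>("list_conversations"),
    deleteConversation: (id: string) =>
      invoke<void>("delete_conversation", { id }),
  };
}
```

In the app this would be called as `makeBackendApi(invoke)` with the real `invoke`. On the Rust side, each command name would correspond to a function marked `#[tauri::command]` and registered with `tauri::generate_handler!`. The generic `T` is only a compile-time promise, because `invoke` does not check the payload at runtime. The Rust return types and the TypeScript interfaces still have to be kept in sync by hand.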

React 19 with TypeScript handles the entire frontend. I used TanStack Router for file-based routing because it felt more natural than React Router for this kind of app, and its type safety on route params and search params is genuinely good. For components, shadcn/ui gave me a set of accessible building blocks, so I wasn't starting completely from scratch.
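
Here is a rough illustration of what typed search params buy you. TanStack Router lets a route declare a `validateSearch` function that turns the raw query string into a typed object. The sketch below is a standalone version of such a validator. The `/history` route and its `q`, `provider`, and `page` params are hypothetical.

```typescript
// Hypothetical search params for a conversation history route,
// e.g. /history?q=rust&provider=openai&page=2
export interface HistorySearch {
  q: string;
  provider: string | null;
  page: number;
}

// Accepts untyped search values and returns a fully typed object,
// falling back to defaults when a value is missing or malformed.
export function validateHistorySearch(
  search: Record<string, unknown>,
): HistorySearch {
  const page = Number(search.page);
  const q = search.q;
  return {
    // Values may arrive as numbers as well as strings, so accept both for `q`.
    q: typeof q === "string" || typeof q === "number" ? String(q) : "",
    provider: typeof search.provider === "string" ? search.provider : null,
    page: Number.isInteger(page) && page > 0 ? page : 1,
  };
}
```

You would pass this as the `validateSearch` option of a `createFileRoute("/history")({ ... })` definition. `Route.useSearch()` should then return a `HistorySearch`, so a typo like `search.pgae` becomes a compile error rather than a silent `undefined`.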

Getting multiple providers to work consistently

Every AI provider has a slightly different API. Some stream responses differently, some use different auth schemes, and some handle function calling differently. The Vercel AI SDK was a lifesaver here: it abstracts away much of that inconsistency and gives you a unified streaming interface. I was initially skeptical about adding another dependency for this, but it saved a lot of time and the library is well maintained.
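
The core idea behind a unified streaming interface can be sketched without the SDK itself. Each provider gets a small adapter that pulls the text out of its own chunk format, and everything downstream consumes a plain stream of strings. The two chunk shapes below are simplified and hypothetical. This is not how the Vercel AI SDK is implemented internally, although the result of the SDK's `streamText` does expose a similar async iterable of text called `textStream`.

```typescript
// Two simplified, hypothetical chunk shapes standing in for provider wire formats.
export type ChunkA = { choices: { delta: { content?: string } }[] };
export type ChunkB = { type: string; delta?: { text?: string } };

// An adapter extracts the text delta (if any) from one provider-specific chunk.
export type Adapter<C> = (chunk: C) => string | undefined;

export const adapterA: Adapter<ChunkA> = (chunk) => chunk.choices[0]?.delta.content;
export const adapterB: Adapter<ChunkB> = (chunk) =>
  chunk.type === "text_delta" ? chunk.delta?.text : undefined;

// Normalizes any provider stream into a stream of text pieces,
// skipping chunks that carry no text (start/stop events, empty deltas).
export async function* textDeltas<C>(
  source: AsyncIterable<C>,
  adapt: Adapter<C>,
): AsyncGenerator<string> {
  for await (const chunk of source) {
    const text = adapt(chunk);
    if (text) yield text;
  }
}

// UI code only ever sees strings, whichever provider produced them.
export async function collectText(stream: AsyncIterable<string>): Promise<string> {
  let result = "";
  for await (const piece of stream) result += piece;
  return result;
}
```

In a chat view, the loop in `collectText` would instead append each piece to the message being rendered. That is what makes a response appear incrementally, and the view never needs to know which provider it came from.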

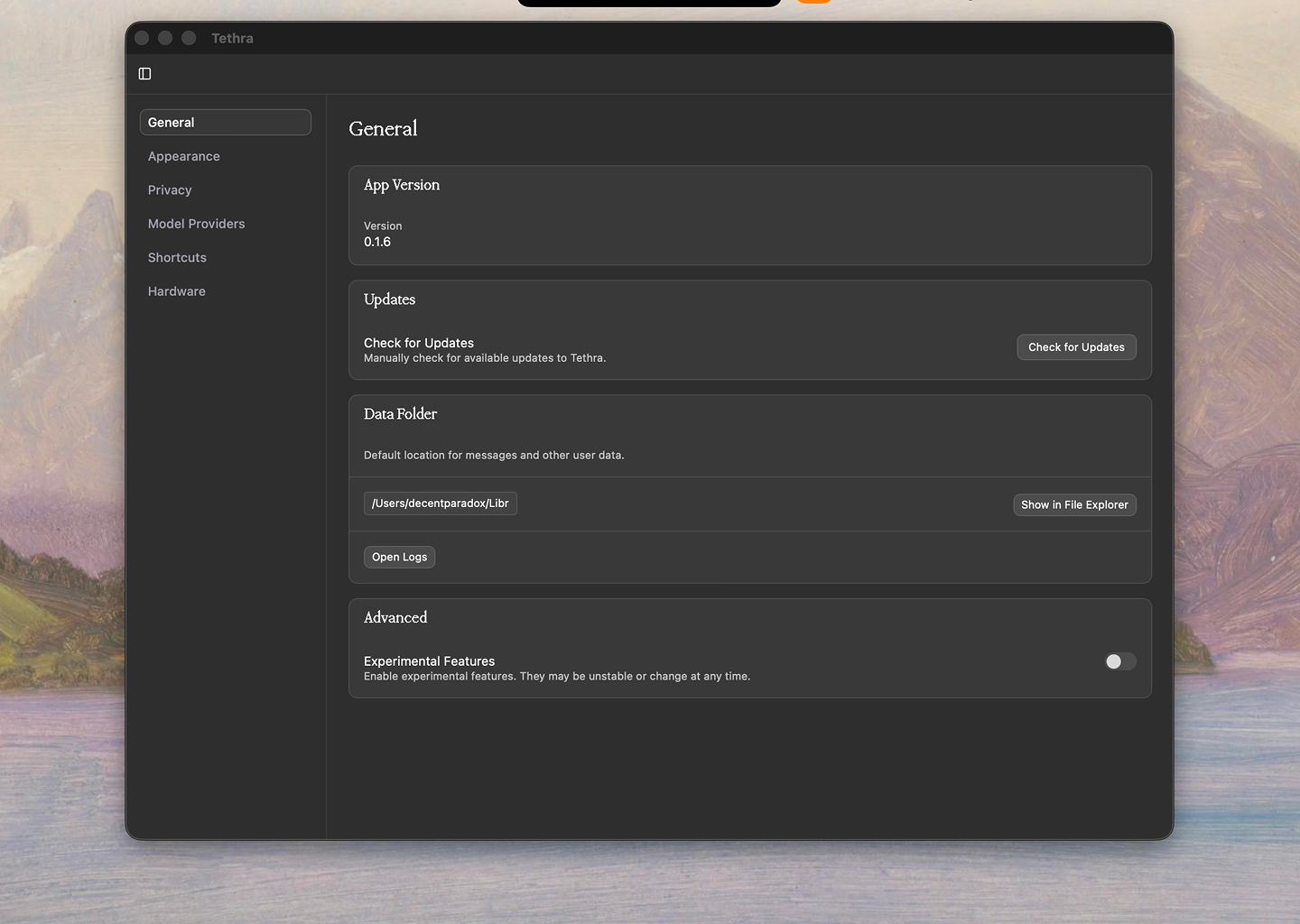

Conversations are stored in SQLite via Tauri's SQL plugin. Each conversation has an ID, its messages stored as JSON, metadata such as the model and provider, and a flag for whether it has been published to the web. This local-first approach means the full conversation history is available offline (only new requests to a provider need a connection), and the user owns their data completely. I didn't want to run any kind of sync service, so the simplicity of just writing to a local database file felt right.
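
Here is a minimal sketch of what that record could look like, assuming a single `conversations` table. The table name, column names, and TypeScript types are hypothetical. The post only specifies an ID, messages stored as JSON, model and provider metadata, and a published flag. The two conversion functions mark the places where JSON and SQLite types meet.

```typescript
// Hypothetical in-app shapes.
export interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

export interface Conversation {
  id: string;
  model: string;
  provider: string;
  messages: ChatMessage[];
  published: boolean;
}

// Hypothetical schema. SQLite has no boolean type, so the flag is stored as 0/1.
export const CREATE_CONVERSATIONS_TABLE = `
CREATE TABLE IF NOT EXISTS conversations (
  id        TEXT PRIMARY KEY,
  model     TEXT NOT NULL,
  provider  TEXT NOT NULL,
  messages  TEXT NOT NULL,            -- JSON array of ChatMessage
  published INTEGER NOT NULL DEFAULT 0
)`;

// A row as it comes back from the database.
export interface ConversationRow {
  id: string;
  model: string;
  provider: string;
  messages: string;
  published: number;
}

export function toRow(c: Conversation): ConversationRow {
  return {
    id: c.id,
    model: c.model,
    provider: c.provider,
    messages: JSON.stringify(c.messages), // whole message list in one column
    published: c.published ? 1 : 0,
  };
}

export function fromRow(row: ConversationRow): Conversation {
  return {
    id: row.id,
    model: row.model,
    provider: row.provider,
    // Trusts the stored JSON; a stricter version would validate it.
    messages: JSON.parse(row.messages) as ChatMessage[],
    published: row.published !== 0,
  };
}
```

With this layout, appending a message means rewriting the `messages` column. Querying individual messages also needs SQLite's JSON functions rather than plain columns. For a single-user desktop app, that trade for simplicity is usually acceptable.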

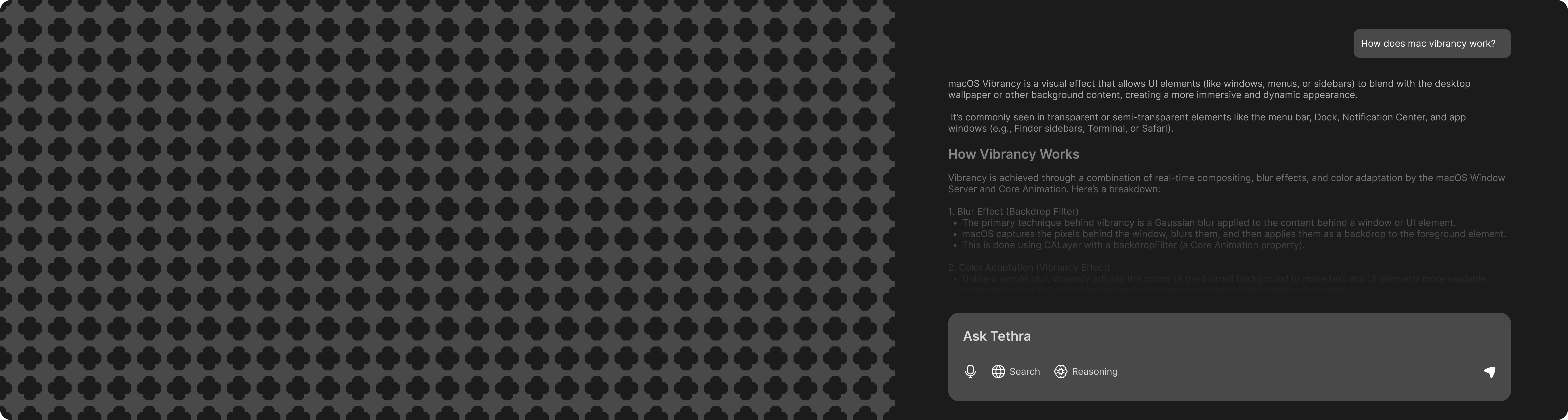

The web sharing part

This was the most interesting problem to solve. Local conversations are great, but sometimes you want to send someone a link to a useful exchange you had with an AI. The approach I went with: when you choose to share a conversation, it gets uploaded to a lightweight backend that serves it as a static, read-only page. The conversation itself stays in your local SQLite database. The shared version is just a snapshot of it at the moment you shared it, and that snapshot can be revoked.
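
The snapshot model can be sketched as a small store on the sharing backend. Everything here is hypothetical: the in-memory `Map` stands in for whatever storage the backend actually uses, and the share ID is passed in rather than generated. The key property is that publishing copies the messages. Later edits to the local conversation therefore never change what has already been shared, and revoking deletes only the copy.

```typescript
// Hypothetical shapes for what gets uploaded when a conversation is shared.
export interface SharedMessage {
  role: string;
  content: string;
}

export interface Snapshot {
  shareId: string;
  title: string;
  messages: SharedMessage[];
  createdAt: string; // ISO timestamp of when it was published
}

// In-memory stand-in for the share backend's storage.
export class SnapshotStore {
  private readonly snapshots = new Map<string, Snapshot>();

  // Copies each message so the snapshot is independent of the local conversation.
  publish(shareId: string, title: string, messages: SharedMessage[]): Snapshot {
    const snapshot: Snapshot = {
      shareId,
      title,
      messages: messages.map((m) => ({ ...m })),
      createdAt: new Date().toISOString(),
    };
    this.snapshots.set(shareId, snapshot);
    return snapshot;
  }

  // What the read-only share page would look up.
  get(shareId: string): Snapshot | undefined {
    return this.snapshots.get(shareId);
  }

  // Revoking removes the public copy; local data is never touched here.
  revoke(shareId: string): boolean {
    return this.snapshots.delete(shareId);
  }
}
```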

The public share page is intentionally simple: just the conversation, rendered cleanly with markdown support. No login is required to view it, and the URL contains an opaque identifier that is impractical to guess. It's not a perfect privacy model, since anyone who has the link can read the page, but for casual sharing it works well and doesn't require the recipient to have an account anywhere.
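
Whether a share URL can be guessed mostly comes down to how its ID is generated. Below is a minimal sketch that assumes the ID is drawn from a cryptographically secure random source via the Web Crypto API's `crypto.getRandomValues`. That function is available in browsers and as a global in recent Node.js versions. The function name `newShareId` and the 22-character default length are illustrative choices, not taken from the project.

```typescript
// URL-safe alphabet of exactly 64 characters.
const SHARE_ID_ALPHABET =
  "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789-_";

// Hypothetical helper: each character carries 6 random bits, so the default
// length of 22 gives 132 bits, far too many to enumerate or guess.
export function newShareId(length = 22): string {
  const bytes = new Uint8Array(length);
  globalThis.crypto.getRandomValues(bytes); // CSPRNG, not Math.random()
  // 256 is a multiple of 64, so keeping the low 6 bits of each byte stays uniform.
  return Array.from(bytes, (b) => SHARE_ID_ALPHABET.charAt(b & 63)).join("");
}
```

A share URL would then look something like `/share/<id>`. This protects against guessing but not against the link being forwarded, which is the privacy tradeoff the sharing model accepts.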

Reflection:

Tethra pushed me into parts of the stack I hadn't worked with much before: Rust, SQLite at the application level, and the details of how streaming AI APIs actually work under the hood. It also made me think a lot about data ownership and what "local-first" actually means in practice when you're building for normal users.

Tauri is worth the Rust tax:

The smaller binary size and memory footprint compared to Electron are real. Writing some Rust for the backend commands took time to get comfortable with, but the IPC model is clean and the compiler catches a lot of issues before they become runtime bugs. I'd reach for Tauri again for any desktop project where performance and binary size matter.

Abstraction layers save real time:

Using the Vercel AI SDK instead of integrating each provider directly meant I wasn't reinventing the wheel for every API difference. The tradeoff is less control over edge cases, but for most use cases the abstraction is the right call.

Good abstractions exist for a reason, so use them.

Local-first is a design commitment, not just a technical one:

Once you commit to local-first, every feature decision flows from that. The web sharing feature needed careful thought about what it means to "publish" something from a local database, and the snapshot model turned out to be the right answer.

The architecture of your app shapes what you can and can't build later, so choose it deliberately.